Flux, the new text-to-image diffusion model released by Black Forest Labs, is outperforming both open-source and closed-source models in the developer's published benchmarks. It has 12 billion parameters and is built on an architecture of multimodal and parallel diffusion transformer blocks.

Flux Schnell is released under the Apache 2.0 license, whereas Flux Dev is under a non-commercial license. Want to use Flux in your commercial projects? Then check their API docs.

Currently, these are the text-to-image models:

- Flux.1 Pro, the highest-scoring variant, is available only through the API; its weights cannot be downloaded.

- Flux.1 Dev, for high-end GPU users with more than 12GB VRAM and 32GB system RAM. It is a guidance-distilled version of Flux Pro and gives more refined results than Flux Schnell. The model itself is under a non-commercial license, but its generated outputs can be used for personal, scientific, and commercial purposes as described in the flux-1-dev-license.

- Flux.1 Schnell, for GPU users with 12GB VRAM or lower. “Schnell” means “fast” in German. This model (for local development, personal, and commercial use) can generate decent-quality images in only 1 to 4 steps.
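The hardware guidance in the list above can be written down as a small helper. This is only a sketch: the function name `pick_flux_variant` is hypothetical, the thresholds are this article's rules of thumb rather than official requirements, and "32GB system RAM" is read here as "32GB or more".

```python
def pick_flux_variant(vram_gb: float, ram_gb: float) -> str:
    """Suggest a local Flux.1 variant from the article's rough hardware guidance."""
    # Dev is suggested for more than 12GB VRAM together with 32GB system RAM.
    # Assumption: "32GB system RAM" is interpreted as 32GB or more.
    if vram_gb > 12 and ram_gb >= 32:
        return "Flux.1 Dev"
    # Schnell is suggested for 12GB VRAM or lower (and is the fallback here
    # when system RAM is short, which is this sketch's own choice).
    return "Flux.1 Schnell"


print(pick_flux_variant(vram_gb=16, ram_gb=32))
print(pick_flux_variant(vram_gb=8, ram_gb=16))
```

Flux.1 Pro is left out on purpose, since it is only reachable through the API.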

Source: Black Forest Labs

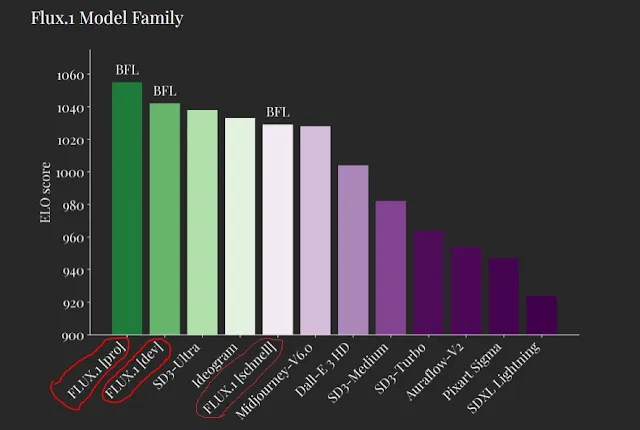

The test results published by Black Forest Labs show the model outperforming other renowned models such as Stable Diffusion 3 Ultra, Midjourney V6.0, and DALL·E 3 (HD).

Here, the Flux.1 Pro model, with an ELO score of about 1060, surpasses all the other text-to-image models, followed closely by Flux Dev (about 1050). SDXL Lightning is the lowest performer, with an ELO score of about 930.
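To put the gap in perspective, here is a small sketch that converts a rating difference into an expected win rate. It assumes the chart uses the standard Elo formula with a scale of 400, which the source does not state; the ratings are the approximate values quoted above, and the function name is our own.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo formula (scale 400)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Approximate scores read from the chart above.
flux_pro, flux_dev, sdxl_lightning = 1060, 1050, 930

print(round(elo_expected_score(flux_pro, sdxl_lightning), 2))
print(round(elo_expected_score(flux_pro, flux_dev), 2))
```

Under that assumption, Flux.1 Pro would be preferred over SDXL Lightning in roughly 68% of head-to-head comparisons, while Pro versus Dev is close to a coin flip (about 51%).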

After some initial confusion in the community, it is now clear that LoRAs can be trained for the Flux model locally; you can also follow the walk-through tutorial. Currently, there is no support for Automatic1111. Let’s move to the installation process in ComfyUI and ForgeUI.

Installation in ComfyUI:

There are multiple variants of the official version released by the community. We have listed all types of Flux variants. You should choose the one which suits your machine.

TYPE A: Official Release by Black Forest Labs

1. Install ComfyUI on your machine.

2. Open ComfyUI Manager and click “Update ComfyUI” to avoid errors. Then restart and refresh it.

3. Download the model that matches your machine. For illustration purposes, we have downloaded both.

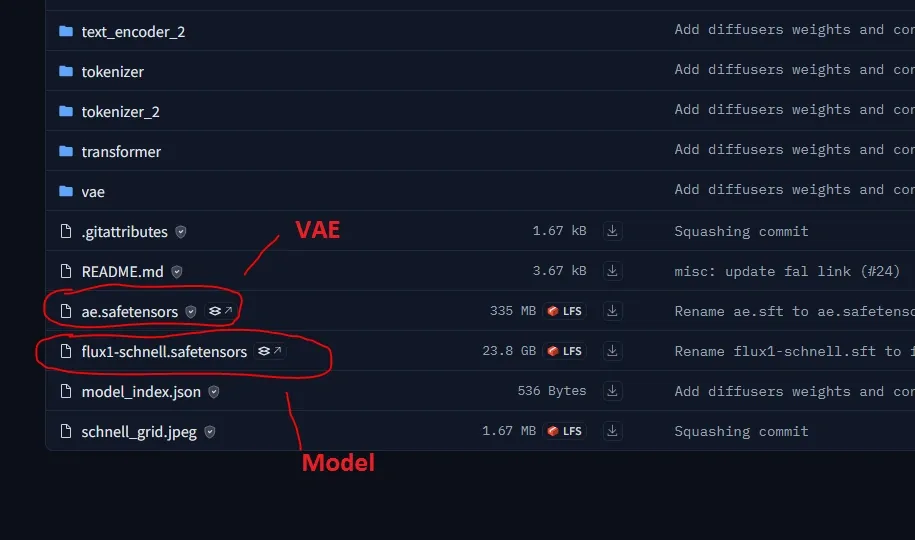

(a) Download Flux.1 Dev, if you have a high-end GPU with more than 12GB VRAM.

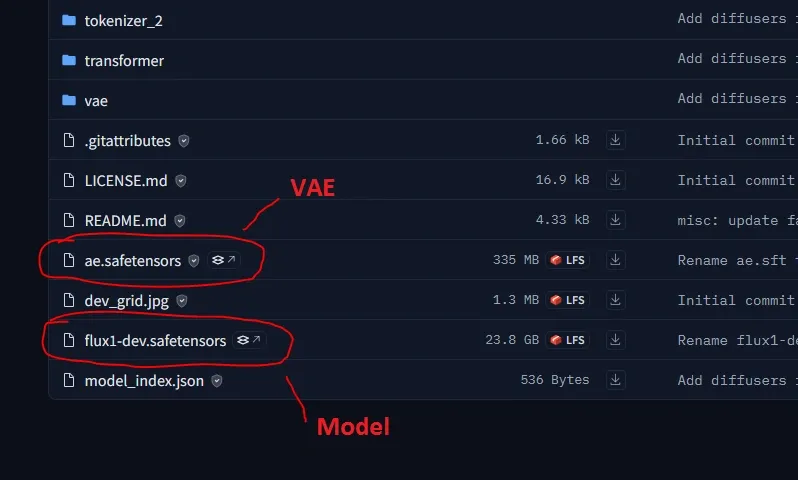

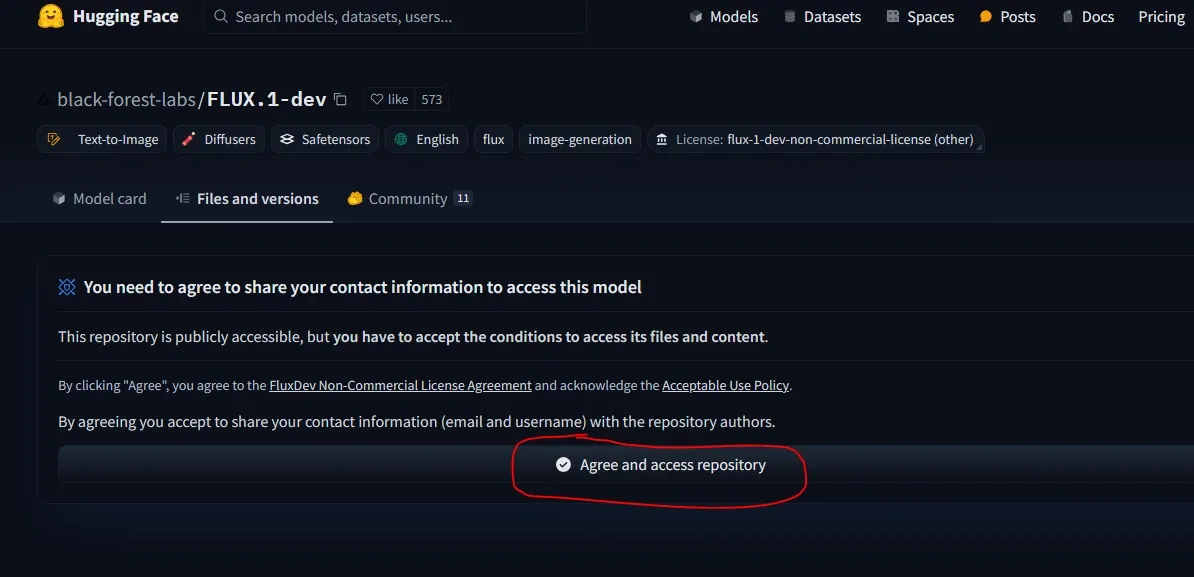

To download this model, log in to Hugging Face and accept the terms and conditions. After downloading, save it inside the “ComfyUI/models/unet” folder.

You also need the VAE (ae.safetensors file), which can be downloaded from the same repository. Save it into the “ComfyUI/models/vae” folder.

We tested this model on the Colab free tier with 12GB VRAM, and the render time was 7 minutes 40 seconds.

(b) Download Flux.1 Schnell if you have a lower-end GPU; it can run on 12GB VRAM. Save it inside the “ComfyUI/models/unet” folder.

You also need the VAE (ae.safetensors file), which can be downloaded from the same repository, as illustrated in the image above. Save it into the “ComfyUI/models/vae” folder.

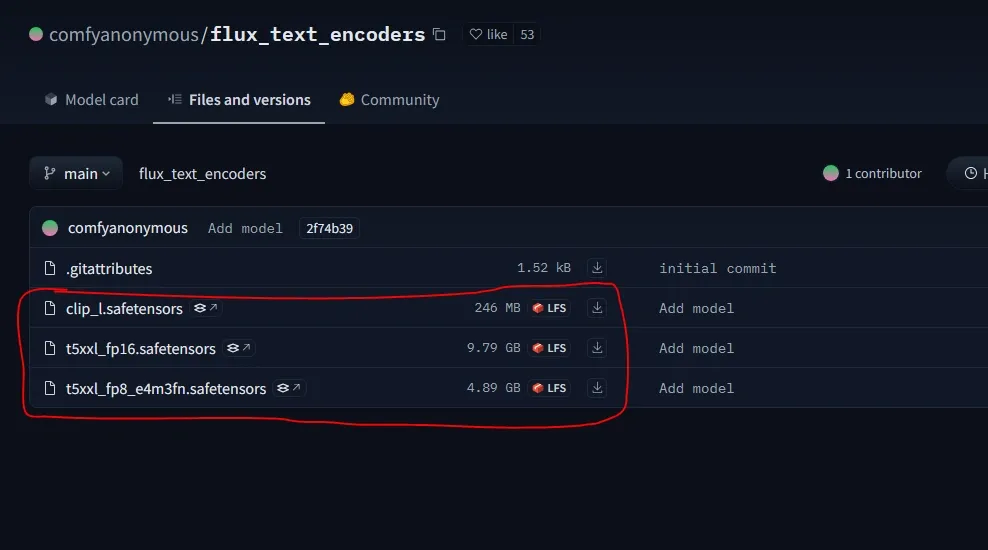

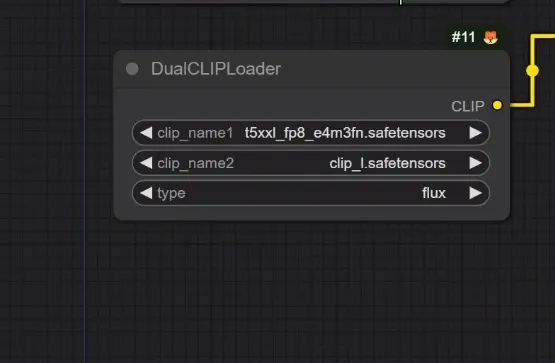

4. Next, you will need the clip models: clip_l.safetensors, plus either t5xxl_fp16 (if you have more than 32GB of system RAM) or t5xxl_fp8_e4m3fn (if you have less memory). Use the FP8 clip model if you are getting out-of-memory errors.

Download them from the Hugging Face repository and save them inside the “ComfyUI/models/clip” folder.

That’s it. Now move on to the workflow section.
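Before loading a workflow, it can help to confirm that every file landed in the right folder. The sketch below checks the TYPE A layout described above. The folder names come from the steps; the exact file names (for example `flux1-dev.safetensors` and the `.safetensors` suffix on the T5 encoders) are assumptions, so adjust them to match what you actually downloaded. The helper names are hypothetical.

```python
from pathlib import Path


def expected_flux_files(low_memory: bool = False) -> list[str]:
    """Relative paths under the ComfyUI folder for the TYPE A setup."""
    # Assumed file names; change them to the files you downloaded.
    t5 = "t5xxl_fp8_e4m3fn.safetensors" if low_memory else "t5xxl_fp16.safetensors"
    return [
        "models/unet/flux1-dev.safetensors",
        "models/vae/ae.safetensors",
        "models/clip/clip_l.safetensors",
        f"models/clip/{t5}",
    ]


def missing_flux_files(comfyui_root: str, low_memory: bool = False) -> list[str]:
    """Return the expected files that are not present under comfyui_root."""
    root = Path(comfyui_root)
    return [rel for rel in expected_flux_files(low_memory) if not (root / rel).is_file()]


if __name__ == "__main__":
    # "ComfyUI" is a placeholder for the path to your own install.
    for rel in missing_flux_files("ComfyUI"):
        print("missing:", rel)
```

Pass `low_memory=True` if you chose the FP8 text encoder instead of the FP16 one.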

TYPE B: The Flux GGUF Quantized version

This is the quantized version of Flux, which runs in ComfyUI and reduces the model size without a large loss in image quality.

It also uses less VRAM and renders faster. In particular, the GGUF loader runs on the GPU, and a quantized T5 text encoder is also supported to lower VRAM usage further.

1. First, install ComfyUI on your machine.

2. Now, click “Update All” in ComfyUI Manager to update.

3. Move to the “ComfyUI/custom_nodes” folder. Click the folder path in the address bar and type “cmd” to open a command prompt.

4. Then clone the repository by copying the command below and pasting it into the command prompt:

git clone https://github.com/city96/ComfyUI-GGUF

5. Move back to the root “ComfyUI_windows_portable” folder. Again, click the folder path in the address bar and type “cmd” to open a command prompt.

Use this command to install the dependencies:

.\python_embeded\python.exe -s -m pip install -r .\ComfyUI\custom_nodes\ComfyUI-GGUF\requirements.txt

6. Multiple models are listed in the respective repository.

Download one of the pre-quantized models for the variant you want to run:

(a) Flux1-Dev GGUF

Save them into the “ComfyUI/models/unet” directory.

The CLIP loader already handles the clip models, so downloading them separately is not required.

If you want, you can download the GGUF text encoder (t5_v1.1-xxl GGUF) that matches your model from Hugging Face and save it into the “ComfyUI/models/clip” folder.

7. Then restart and refresh ComfyUI for the changes to take effect.

8. Move to the workflow section to download the workflow.
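If you prefer to script the clone and dependency-install commands from steps 4 and 5, the sketch below builds the same two commands as argument lists. It only prints them; the install location `C:\ComfyUI_windows_portable` and the function name are hypothetical, and cloning directly into the `custom_nodes` folder by passing a destination to `git clone` is our own shortcut for changing into that folder first.

```python
from pathlib import PureWindowsPath

GGUF_REPO = "https://github.com/city96/ComfyUI-GGUF"


def gguf_install_commands(portable_root: str) -> list[list[str]]:
    """Build the clone and pip-install commands for a Windows portable install."""
    # PureWindowsPath renders backslash paths even when run on another OS.
    root = PureWindowsPath(portable_root)
    node_dir = root / "ComfyUI" / "custom_nodes" / "ComfyUI-GGUF"
    python_exe = root / "python_embeded" / "python.exe"
    return [
        ["git", "clone", GGUF_REPO, str(node_dir)],
        [str(python_exe), "-s", "-m", "pip", "install", "-r",
         str(node_dir / "requirements.txt")],
    ]


# Hypothetical install location; replace it with your own folder.
for cmd in gguf_install_commands(r"C:\ComfyUI_windows_portable"):
    print(" ".join(cmd))
```

To actually run them, each list could be passed to `subprocess.run(cmd, check=True)` from the standard library.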

TYPE C: FLUX By Kijai

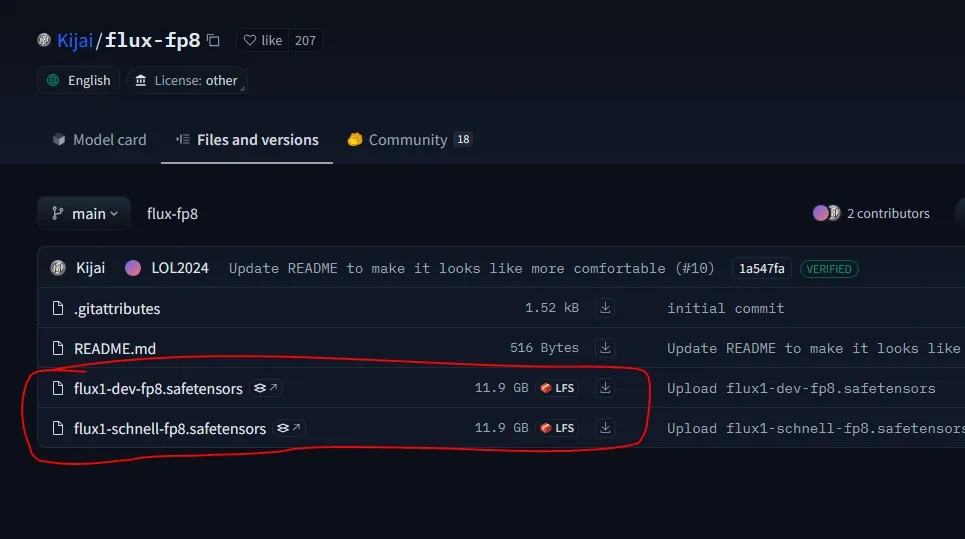

This is an option for lower-end GPU users from another developer, “Kijai”, who released compressed FP8 versions of the Flux Dev and Flux Schnell models.

1. Install ComfyUI on your machine.

2. Users who cannot run the official models can download these from his Hugging Face repository, at the cost of some reduction in image quality.

3. You also need the respective VAE (ae.safetensors file), which can be downloaded as described in TYPE A, section (a) for Flux Dev and section (b) for Flux Schnell. Then put it into the “ComfyUI/models/vae” folder.

4. You will need the clip models: clip_l.safetensors, plus either t5xxl_fp16 (if you have more than 32GB of system RAM) or t5xxl_fp8_e4m3fn (if you have less memory). Use the FP8 clip model if you are getting out-of-memory errors.

Download them from the Hugging Face repository and save them inside the “ComfyUI/models/clip” folder.

5. Now, move to the workflow.

Workflow Explanation:

1. Text-To-image:

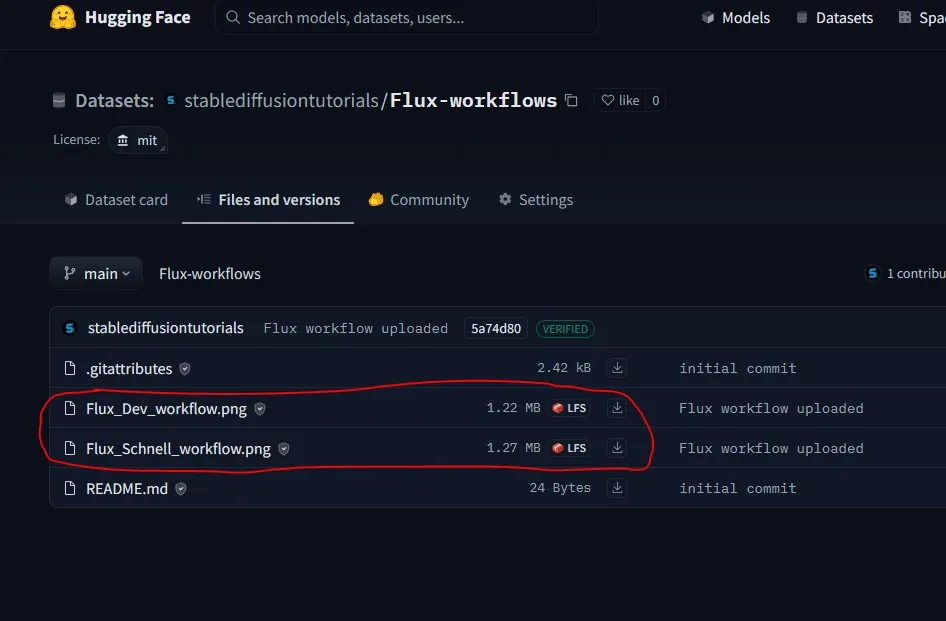

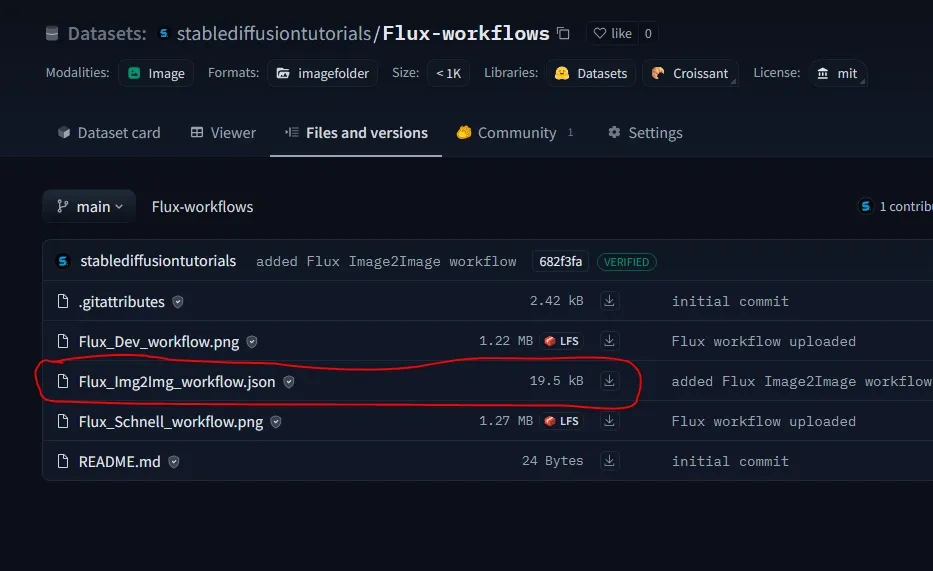

1. There are different workflows for the different Flux variants; they can be downloaded from the Hugging Face repository.

- (a) For TYPE A: Flux_Dev_workflow or Flux_Schnell_workflow

- (b) For TYPE B: Flux_GGUF_workflow

- (c) For TYPE C (Kijai's Flux): use the same workflows as for TYPE A

2. Now, drag and drop the workflow into ComfyUI.

Many users are facing errors like “unable to find load diffusion model nodes”. This happens when you are running an older version of ComfyUI. Open ComfyUI Manager and click “Update ComfyUI”.

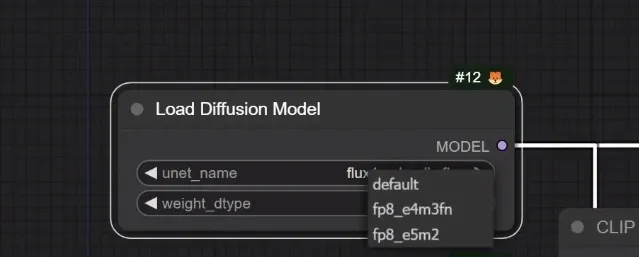

3. Into the Load diffusion model node, load the Flux model, then select the usual “fp8_e5m2” or “fp8_e4m3fn” if getting out-of-memory errors. The default option is the “fp16” version for high-end GPUs.

4. Select the downloaded clip models from the “Dual Clip loader” node. Lower VRAM users should choose the fp8 version (but will impact the image quality) and higher VRAM users can choose the fp8 or fp16 version.

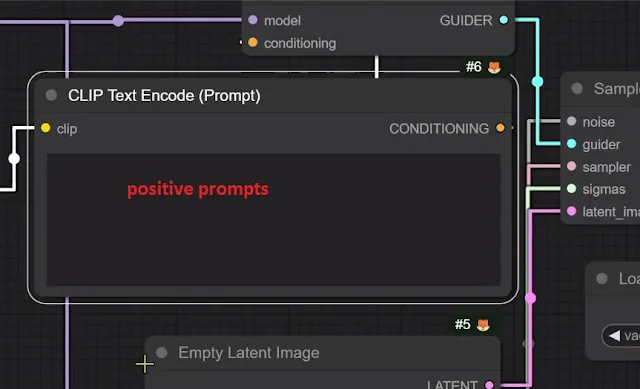

Put your positive prompts. Remember, there is no negative prompt box. So, you need to handle that from positive prompts by inputting the detailed prompts.

5. Recommended settings you need to choose:

For Flux Schnell-

- Sampling Method: Euler

- Steps: 1-4

- Dimensions: 1024 by 1024

- CFG: 1-2

For Flux Dev-

- Sampling Method: Euler

- Steps: 20-50

- Dimensions: 1024 by 1024

- CFG: 3.5
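The recommended values above can be kept in a small lookup table and used as a sanity check before a long render. This is a sketch only: the dictionary layout and the `check_settings` helper are hypothetical and are not part of ComfyUI.

```python
# Recommended ranges from the lists above: (min, max) for steps and CFG.
RECOMMENDED = {
    "schnell": {"sampler": "euler", "steps": (1, 4), "cfg": (1.0, 2.0), "size": (1024, 1024)},
    "dev": {"sampler": "euler", "steps": (20, 50), "cfg": (3.5, 3.5), "size": (1024, 1024)},
}


def check_settings(model: str, steps: int, cfg: float) -> list[str]:
    """Return warnings for values outside the article's suggested ranges."""
    rec = RECOMMENDED[model]
    warnings = []
    low, high = rec["steps"]
    if not low <= steps <= high:
        warnings.append(f"steps={steps} is outside the suggested range {low}-{high}")
    low, high = rec["cfg"]
    if not low <= cfg <= high:
        warnings.append(f"cfg={cfg} is outside the suggested range {low}-{high}")
    return warnings


print(check_settings("schnell", steps=4, cfg=1.0))
print(check_settings("dev", steps=4, cfg=3.5))
```

The second call flags the step count, since 4 steps is a Schnell setting rather than a Dev one.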

Generated using Flux Dev model

Generated using Flux Schnell model

Prompt used for both models: black forest toast spelling out the words ‘TASTY’, tasty, food photography, dynamic shot

The output is finely detailed, with the sprinkles making it look more realistic. The shine on the sauce gives it the feel of a professional shot. With Flux Dev we got what we expected on the first attempt, but with Flux Schnell we had to run it three times to get a comparable result.

You can try our Prompt Generator for Stable Diffusion prompts.

For this test, we ran the Flux Dev and Flux Schnell models on an NVIDIA RTX 4060 Ti with 16GB VRAM. The generation time was 1 minute 31 seconds (fp16) and 50 seconds (fp8).
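A quick back-of-the-envelope calculation may help explain the fp16 versus fp8 difference. The sketch below estimates the size of the diffusion model weights alone from the 12 billion parameters mentioned in the introduction, at 2 bytes per weight for fp16 and 1 byte for fp8. It ignores the text encoders, the VAE, and activations, so real memory use is higher.

```python
PARAMS = 12e9  # 12 billion parameters, as stated in the introduction

BYTES_PER_WEIGHT = {"fp16": 2, "fp8": 1}


def weights_size_gb(params: float, bytes_per_weight: int) -> float:
    """Approximate size of the weights in decimal gigabytes."""
    return params * bytes_per_weight / 1e9


for name, nbytes in BYTES_PER_WEIGHT.items():
    print(f"{name}: ~{weights_size_gb(PARAMS, nbytes):.0f} GB")
```

This prints about 24 GB for fp16 and about 12 GB for fp8. The fp16 weights alone exceed a 16GB card, while the fp8 weights fit, which is one plausible reason the fp8 run was faster here.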

Now, let’s try something with human faces.

Generated using Flux Schnell model

Prompt used: a beautiful asian model, teal colored short hair, wearing transparent glasses, red lipstick, professional makeup, night life, professional photoshoot, 32k

Amazing: the face looks very realistic, with professional lighting and a high level of detail. The hair is well rendered, down to the fine strands. Look at her eyes, which have a natural shine.

The Flux model has understood the context and added a busy nightlife setting. The blurred, out-of-focus lights in the background give a simple touch of an urban cityscape.

Next, let’s test prompt adherence using the Flux Dev model.

Generated using Flux Dev model

Prompt used: a tiny astronaut hatching from an egg on the moon

Let’s try some complicated typography.

Generated using Flux Dev model

Prompt used: a robotic machine with text label “FLUX” holding a sign board painted with text “I don’t like Negatives”

Interestingly, as stated on the official page, the results were more refined with the Flux Dev model than with Flux Schnell.

The prompt understanding, prompt adherence, typography, and scene complexity with detailing are great when compared with other older diffusion-based models.

2. Image-To-Image:

First of all, to work with the respective workflow you must update your ComfyUI from the ComfyUI Manager by clicking on “Update ComfyUI“. This will avoid any errors.

The image-to-image workflow for official FLUX models can be downloaded from the Hugging Face Repository.

Installation in ForgeUI:

1. Install ForgeUI if you have not done so yet.

2. There are two variants of Flux Dev released officially by the Forge developer, plus a Flux Schnell variant from a third-party developer. Choose the one that matches your system.

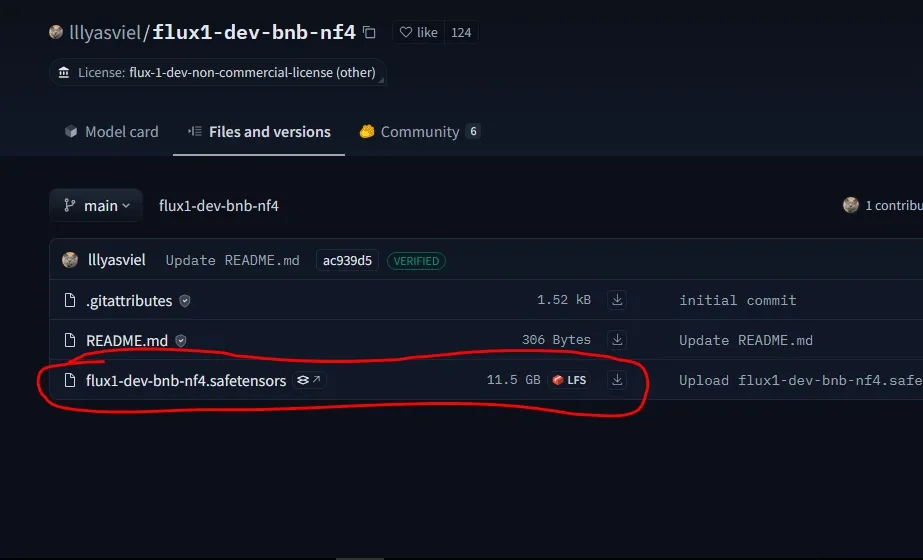

- Download the Flux Dev NF4 version from the Hugging Face repository if you have an NVIDIA RTX 3000/4000 series GPU. Users with a CUDA version newer than 11.7 can use this model. As we have a 4000 series card, we downloaded this model.

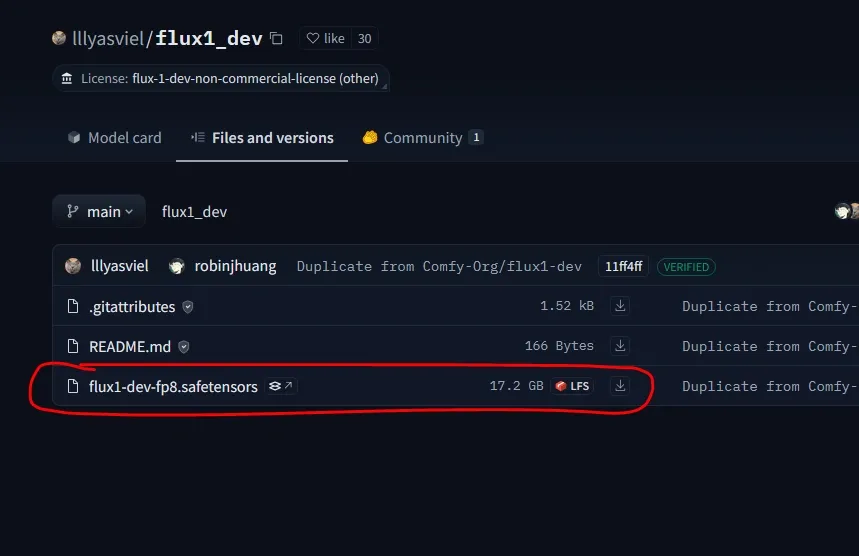

- Download the Flux Dev FP8 version from the Hugging Face repository. This is mostly for users with NVIDIA GTX 1000/2000 series cards.

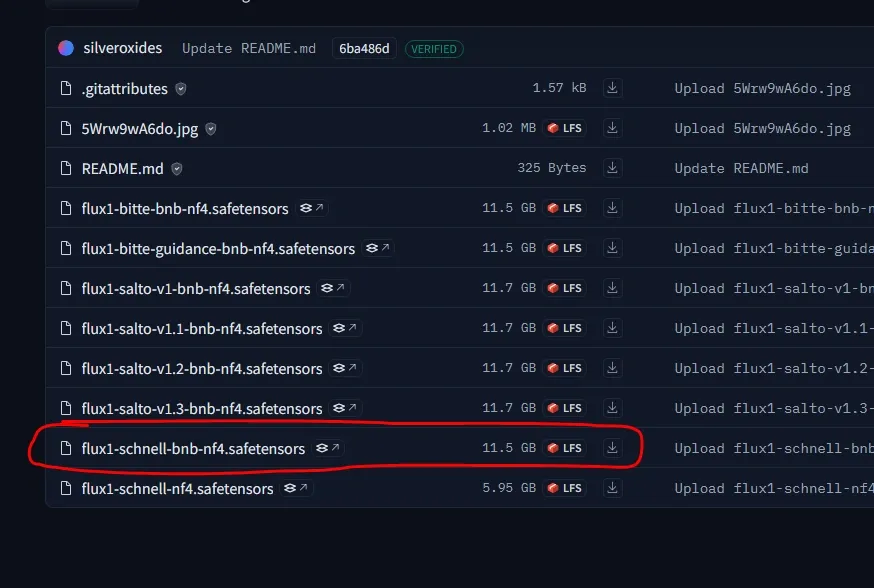

- Download Flux Schnell NF4 from the Hugging Face repository. This is again supported on RTX 3000/4000 series GPUs.

3. After downloading, save it inside the “Forge/webui/models/Stable-diffusion” folder.

4. Then, move to the Forge installation folder and run the “update” bat file to update it. Now, restart ForgeUI using the “run” bat file.
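The download guidance in step 2 can be summarized as a small lookup. This is a sketch under the article's own rules of thumb: the function name and the numeric `gpu_series` argument are hypothetical, and the returned strings are the checkpoint names used in the Forge workflow steps.

```python
def pick_forge_checkpoint(gpu_series: int, model: str = "dev") -> str:
    """Suggest a Forge checkpoint; gpu_series is 1000, 2000, 3000, or 4000."""
    if gpu_series >= 3000:
        # RTX 3000/4000 series cards: the NF4 builds are suggested.
        return "flux-schnell-bnb-nf4" if model == "schnell" else "flux-dev-bnb-nf4"
    if model == "schnell":
        # The article lists no Schnell build for 1000/2000 series cards.
        raise ValueError("no Schnell checkpoint listed for this GPU series")
    # 1000/2000 series cards: the FP8 build of Flux Dev is suggested.
    return "flux-dev-fp8"


print(pick_forge_checkpoint(4000))
print(pick_forge_checkpoint(2000))
```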

Workflow Explanation:

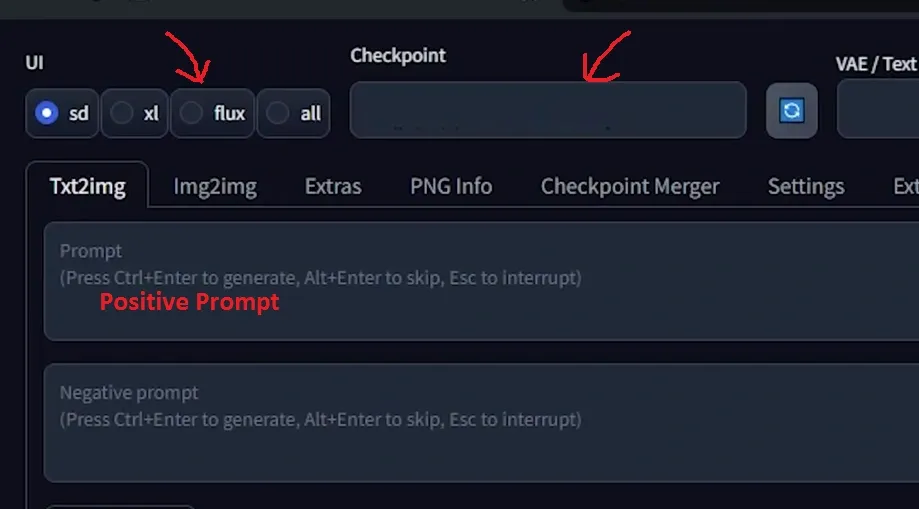

1. Open your Forge WebUI, then select the “Flux” option.

2. Select your downloaded model (“flux-dev-bnb-nf4” or “flux-dev-fp8” or “flux-schnell-bnb-nf4“) from the checkpoint drop-down option.

3. Enter your positive prompt. Flux doesn’t have a negative prompt feature, so be creative and handle that in your positive prompt as well.

Recommended settings:

Flux Dev

- Sampling Method: Euler

- Steps: 20-50

- CFG: 3.5 (default)

- Dimensions: 1024 by 1024

Flux Schnell

- Sampling Method: Euler

- Steps: 4

- CFG: 1

- Dimensions: 1024 by 1024

4. Hit the “Generate” button to start your image generation.

Here, we are using an NVIDIA RTX 4060 Ti with 16GB VRAM, and the render took 53 seconds with the Flux Dev NF4 variant, generating a 1024 by 1024 image with 20 sampling steps.

Using API:

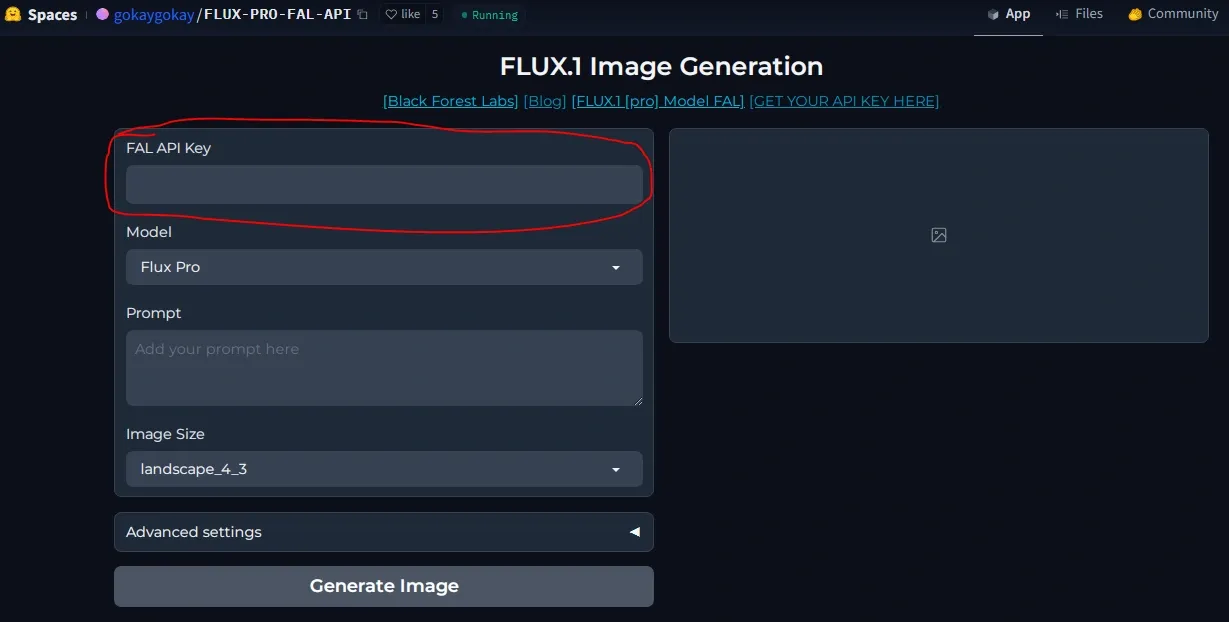

1. As reported, Flux Pro cannot be downloaded by the public. To test this model, first create an API key from FalAI (a partner of Black Forest Labs) and enter it into the Gradio-based Flux Pro WebUI hosted on Hugging Face Spaces.

2. Finally, click “Generate Image“.

Conclusion:

Flux is a considerably more powerful model than the older diffusion models. After our tests, we can conclude that Flux Dev produces better results than Flux Schnell. It is now available in ComfyUI, Forge, and SwarmUI.